Obsidian Portal

Laravel 12 platform on AWS GovCloud powering the VERTEX ecosystem.

Summary

Obsidian Portal is a Laravel 12 platform on AWS GovCloud that serves as the centralized backend for VERTEX. It provides agentic RAG, dual-layer authentication, and multi-tenant isolation.

Problem

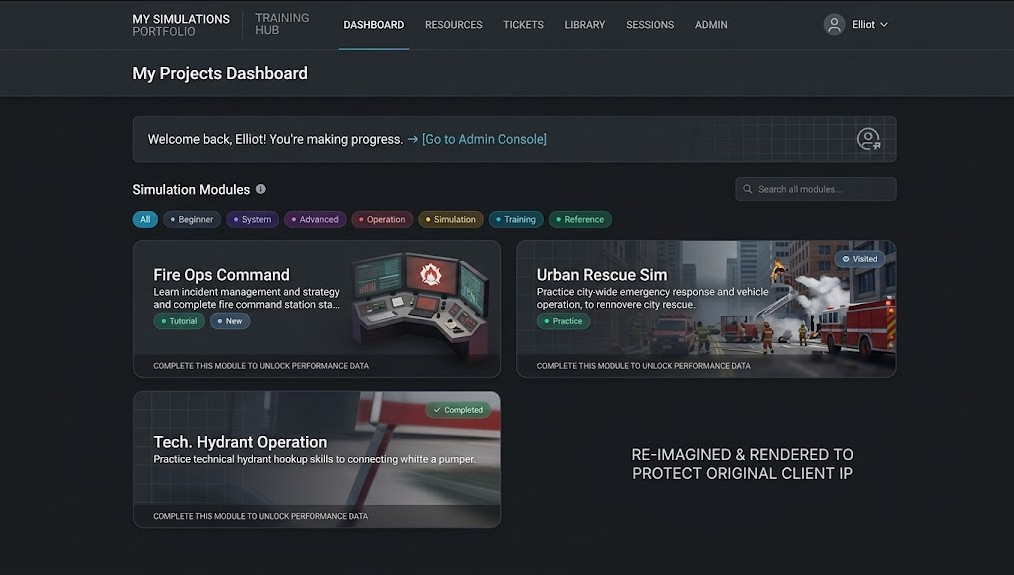

VERTEX needs a backend that holds training scenarios, user accounts, multi-tenant client groups, document corpora for RAG, build artifacts, instructor dashboards, surveys, support tickets, and an LLM tool layer. Most of it runs inside AWS GovCloud at FedRAMP High. The Portal is the centralized backend for all of it.

Architecture

The Portal is built on Laravel 12 with Filament for admin, Spatie Permission for RBAC, and Sanctum dual-auth: cookie sessions for the browser, bearer tokens for Unity, CLI, and native apps. Service-to-service traffic uses scoped app tokens verified with constant-time comparison. The AWS GovCloud infrastructure consists of RDS with encryption at rest, S3 with SSE-KMS, AWS Bedrock for inference, Polly for neural TTS, Transcribe for STT, and SES with SPF, DKIM, and DMARC. The agentic RAG layer gives the LLM access to tools, and the model itself decides when to search documents, fetch chapters, or generate quizzes.
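
The constant-time check on service-to-service app tokens can be illustrated with a minimal sketch. It is written in Python for brevity and is not the Portal's code. The function names and the choice to store a SHA-256 digest instead of the plaintext token are assumptions made for illustration. In PHP the equivalent comparison primitive is `hash_equals`.

```python
import hashlib
import hmac
import secrets


def hash_token(token: str) -> str:
    """Return a SHA-256 hex digest so the plaintext token is never stored (assumed scheme)."""
    return hashlib.sha256(token.encode("utf-8")).hexdigest()


def verify_app_token(presented: str, stored_digest: str) -> bool:
    """Compare digests in constant time so response timing does not reveal
    how many leading characters of a guess were correct."""
    return hmac.compare_digest(hash_token(presented), stored_digest)


# Hypothetical usage: issue a token once, keep only its digest server-side.
issued = secrets.token_urlsafe(32)
stored = hash_token(issued)
```

A plain `==` on strings can return as soon as the first differing character is found, which is the timing signal that a constant-time comparison is meant to remove.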

Outcomes

- AWS GovCloud deployment at FedRAMP High and DoD IL2+

- Filament admin resources across Admin and Developer panels

- Tool registries for both web and game contexts

- End-to-end document RAG: upload, text extraction, chapter detection, semantic chunking, embeddings, hybrid keyword + cosine search

- Comprehensive automated test suite

- Comprehensive developer documentation
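
The hybrid keyword + cosine search named in the RAG outcome above can be sketched as a weighted blend of two scores. This is a simplified Python illustration under stated assumptions, not the Portal's ranking code: the term-overlap keyword score, the `alpha` weight, and the chunk dictionary shape are all hypothetical.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def keyword_score(query, text):
    """Fraction of distinct query terms that appear in the chunk text (assumed scoring)."""
    terms = set(query.lower().split())
    words = set(text.lower().split())
    return len(terms & words) / len(terms) if terms else 0.0


def hybrid_search(query, query_vec, chunks, alpha=0.5, top_k=3):
    """Rank chunks by alpha * keyword score + (1 - alpha) * cosine similarity."""
    scored = []
    for chunk in chunks:
        score = (alpha * keyword_score(query, chunk["text"])
                 + (1 - alpha) * cosine(query_vec, chunk["embedding"]))
        scored.append((score, chunk["id"]))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return scored[:top_k]
```

Blending the two signals lets an exact term match surface a chunk whose embedding is only loosely similar, while the cosine term still catches paraphrases that share no keywords with the query.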

Hardest bits

- Browser-based CLI authentication where the CLI initiates a token, the browser handles login, and the CLI receives a Sanctum API token back

- Multi-tenant document scoping where group changes trigger token revocation

- Dual-layer authentication: user-scoped Sanctum tokens plus service-to-service app tokens with per-token tool restrictions

- Agentic loop where the LLM is the orchestrator deciding when to use retrieval tools, with citation injection and per-message logging
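
The last two items can be pictured together as a small loop: the model chooses the next step, the loop enforces the per-token tool allow-list, and citations and log entries are collected along the way. The Python sketch below is a toy under stated assumptions. The `ToolCall` and `Final` types, the `REGISTRY` contents, and the scripted stand-in for the LLM are all hypothetical and do not reflect the Portal's actual interfaces.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ToolCall:
    name: str
    args: dict


@dataclass
class Final:
    text: str


def search_documents(query: str) -> dict:
    # Stand-in for real retrieval; returns content plus a citable source id.
    return {"content": f"Result for {query}", "source": "doc-1#chapter-2"}


# Hypothetical tool registry keyed by tool name.
REGISTRY: dict[str, Callable[..., dict]] = {"search_documents": search_documents}


def run_agent(model, user_message, allowed_tools, max_steps=4):
    """Let the model pick tools until it returns a final answer or hits the step limit."""
    log, citations = [], []
    history = [("user", user_message)]
    for _ in range(max_steps):
        step = model(history)
        if isinstance(step, Final):
            # Citation injection: append collected sources to the answer text.
            suffix = "".join(f" [{i + 1}: {src}]" for i, src in enumerate(citations))
            log.append({"event": "final"})
            return step.text + suffix, log
        if step.name not in allowed_tools or step.name not in REGISTRY:
            # Token-level restriction: the tool is refused, not executed.
            result = {"content": f"tool '{step.name}' not permitted", "source": None}
        else:
            result = REGISTRY[step.name](**step.args)
        if result["source"]:
            citations.append(result["source"])
        log.append({"event": "tool", "name": step.name})
        history.append(("tool", result["content"]))
    return "Step limit reached.", log


def scripted_model(history):
    # Stand-in for the LLM: search once, then answer.
    if not any(role == "tool" for role, _ in history):
        return ToolCall("search_documents", {"query": "evacuation"})
    return Final("Follow the posted route.")
```

Calling `run_agent(scripted_model, "How do I evacuate?", {"search_documents"})` runs one tool step and then returns the answer with its citation appended. Passing an empty allow-list makes the same tool call come back as refused, so the answer carries no citation.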